On March 28, 2024, the Office of Management and Budget (OMB) issued the memorandum “Advancing Governance, Innovation, and Risk Management for Agency Use of Artificial Intelligence,” a pivotal milestone for federal agencies and their data leaders. (Read the memorandum)

This directive compels agencies to swiftly enact a number of specific AI management protocols, including:

- Appointing a Chief AI Officer (CAIO) within 60 days

- Establishing an AI Governance Board within the same timeframe (CFO Act agencies)

- Developing and submitting a compliance plan within 180 days

- Creating an annual AI use case inventory

- Formulating a comprehensive AI strategy within 365 days for CFO Act agencies
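To make the timeline concrete, the day counts above can be converted into calendar dates. The following is a minimal sketch, assuming each deadline is counted in plain calendar days from the March 28, 2024 issuance date; the action labels and the `due_dates` helper are illustrative names, not terms from the memorandum, and agencies should confirm official due dates with OMB.

```python
from datetime import date, timedelta

# Issuance date stated in this article.
ISSUED = date(2024, 3, 28)

# Day counts taken from the list above; the labels are informal shorthand.
DEADLINES_IN_DAYS = {
    "Designate Chief AI Officer": 60,
    "Convene AI Governance Board": 60,
    "Submit compliance plan": 180,
    "Release AI strategy (CFO Act agencies)": 365,
}


def due_dates(issued: date = ISSUED) -> dict:
    """Return each action's due date, assuming plain calendar-day counting."""
    return {
        action: issued + timedelta(days=days)
        for action, days in DEADLINES_IN_DAYS.items()
    }


if __name__ == "__main__":
    for action, due in due_dates().items():
        print(f"{due.isoformat()}  {action}")
```

Under that assumption, the 60-day actions fall on May 27, 2024, the compliance plan on September 24, 2024, and the AI strategy on March 28, 2025.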

If you’re in a data or AI leadership position at a federal agency, and you’re feeling an overwhelming sense of urgency, we want to help you navigate the new requirements.

Advancing responsible AI innovation while minimizing risk

At its core, the Memorandum not only mandates structural changes but also aims to propel forward-thinking strategies within federal agencies to harness AI innovation while managing its risk.

Certainly, the need for responsible AI governance is real, and so is the risk to citizens. Recently, the Federal Trade Commission (FTC) banned a major retailer from using AI facial recognition technology after finding that the retailer had deployed it without proper customer disclosure or consent, violating privacy norms.

The OMB Memorandum emphasizes the need for transparency, accountability, and robust governance frameworks to manage AI risks and ensure safe and compliant practices in AI applications.

It directs agencies to focus on several pivotal areas to ensure AI’s beneficial integration into government operations, including:

- Developing AI strategies that foster innovation: Agencies are encouraged to craft forward-looking strategies that not only leverage AI for operational improvements but also inspire cutting-edge applications that set new standards in public service

- Removing barriers to the responsible use of AI: Agencies are directed to identify and dismantle the legal, procedural, and technological obstacles that hinder the ethical deployment of AI, creating a streamlined pathway for AI projects that adhere to ethical standards and promote public trust

- Bolstering AI talent and promoting sharing and collaboration: By investing in talent development and fostering an environment of collaboration, agencies can cultivate a rich ecosystem of AI expertise. This includes enhancing skills across the workforce and facilitating knowledge exchange both within and between agencies to spur collective progress

- Defining risks and establishing safe AI practices: Agencies must clearly identify the potential risks of their AI applications, from privacy concerns to decision-making biases, and establish robust practices to mitigate them, so that AI systems operate within safe and ethical parameters and public services remain trustworthy

Together, these directives aim to shape a government landscape where AI not only optimizes agency functions but does so with an emphasis on responsibility, ethical integrity, and collaboration. This balanced approach seeks to safeguard public interest while driving innovation, setting a global standard for responsible AI use in governance.

What is AI governance and why is it important

AI starts with trusted data. At Collibra, AI governance is an extension of our data governance efforts, which have been tested many times over with organizations around the world.

So what is AI governance?

AI governance involves the application of rules, processes and responsibilities to maximize the value of automated data products while ensuring ethical practices and mitigating risks. It’s crucial for federal agencies that want to leverage any AI use case, including generative AI.

Without proper governance and a clear understanding of the underlying data, deploying AI applications can lead to unwanted outcomes, such as model bias and inaccuracies, as well as legal, ethical and critical trust challenges. With governance in place, however, the benefits for federal agencies are substantial, including enhanced services, increased operational efficiency and optimized decision-making capabilities.

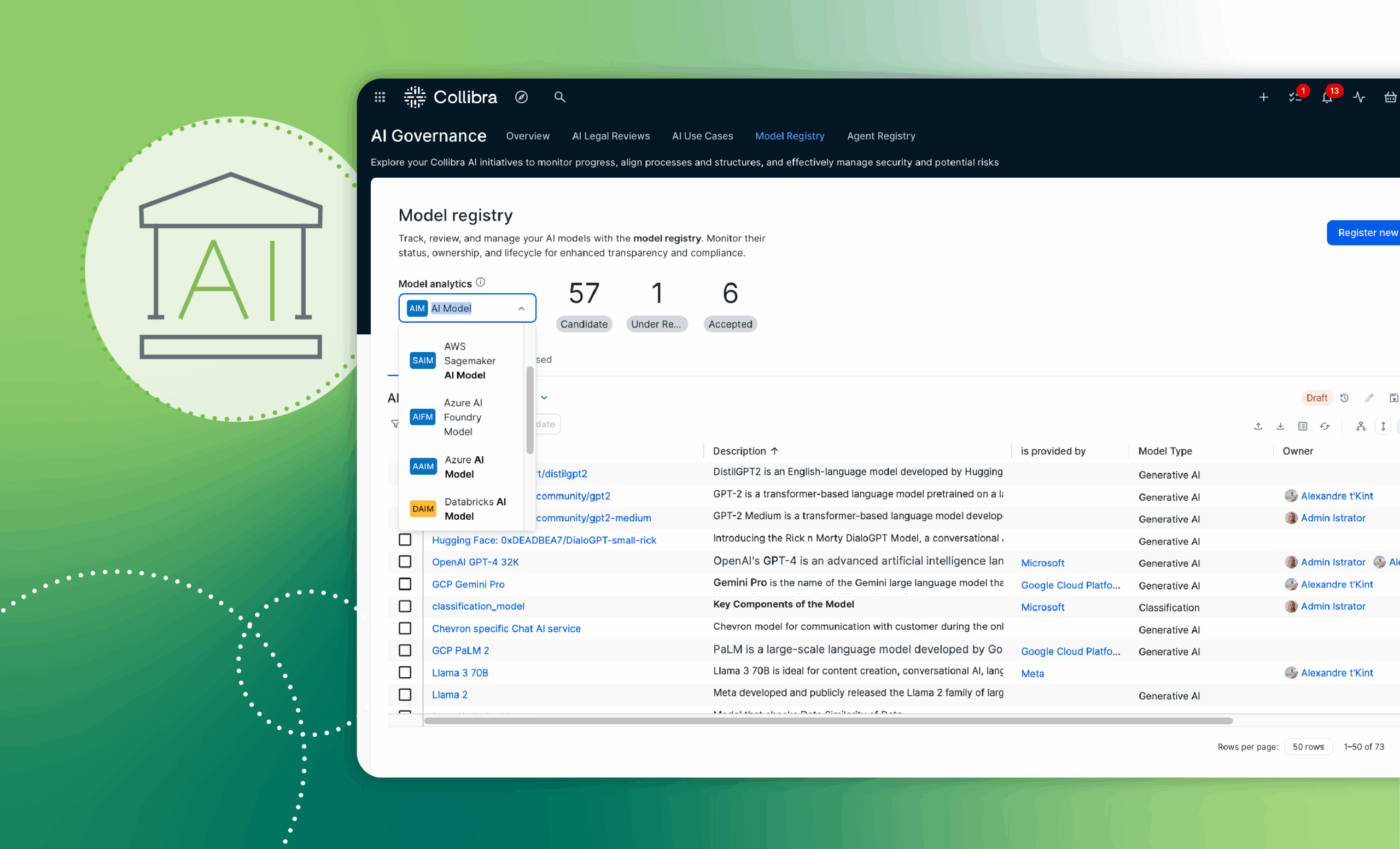

A FedRAMP certified software solution, Collibra AI Governance offers a range of benefits to federal agencies, including:

- More reliable AI: Catalog AI use cases with model cards, connect them to underlying data and continuously validate data reliability

- More compliant AI: Discover sensitive data classes and enforce data access policies to reduce legal and reputational risks

- More traceable AI: Examine and explore the lineage of all AI inputs and outputs, building externally referenceable AI model cards to instill trust
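As a rough illustration of what cataloging an AI use case and linking it to its underlying data can involve, here is a minimal sketch of an inventory record. It is not Collibra's schema or API; the `AIUseCase` class, its field names, and the 365-day threshold are assumptions chosen for illustration, with the threshold loosely modeled on the memorandum's requirement to review safety-impacting and rights-impacting AI at least annually.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional


@dataclass
class AIUseCase:
    """Hypothetical inventory record for one AI use case."""

    name: str
    owner: str
    data_sources: List[str] = field(default_factory=list)  # links to underlying data
    safety_impacting: bool = False
    rights_impacting: bool = False
    last_risk_review: Optional[date] = None

    def needs_risk_review(self, today: date, max_age_days: int = 365) -> bool:
        """Flag covered use cases whose last review is missing or too old."""
        if not (self.safety_impacting or self.rights_impacting):
            return False  # the periodic review applies only to covered AI
        if self.last_risk_review is None:
            return True
        return (today - self.last_risk_review).days > max_age_days


if __name__ == "__main__":
    inventory = [
        AIUseCase("Benefits eligibility triage", "Program Office",
                  ["claims_db"], rights_impacting=True,
                  last_risk_review=date(2024, 1, 15)),
        AIUseCase("Help desk chatbot", "IT", ["kb_articles"]),
    ]
    overdue = [u.name for u in inventory if u.needs_risk_review(date(2025, 3, 1))]
    print(overdue)
```

A real inventory would carry many more fields (purpose, model version, waivers, and so on); the point here is only that each use case is tied to named data sources and to a review date that can be checked automatically.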

Learn more about Collibra AI Governance.

Key actions, deadlines and where Collibra can help

| Responsible Entity | Action | Section | Deadline | Collibra Can Help |
| --- | --- | --- | --- | --- |
| Each Agency | Designate an agency Chief AI Officer and notify OMB | 3(a)(i) | 60 days | |
| Each CFO Act Agency | Convene agency AI Governance Board | 3(a)(ii) | 60 days | |
| Each Agency | Submit to OMB and release publicly an agency plan to achieve consistency with this memorandum or a written determination that the agency does not use and does not anticipate using covered AI | 3(a)(iii) | 180 days and every two years thereafter until 2036 | ✅ |
| Each CFO Act Agency | Develop and release publicly an agency strategy for removing barriers to the use of AI and advancing agency AI maturity | 4(a)(i) | 365 days | |
| Each Agency** | Publicly release an expanded AI use case inventory and report metrics on use cases not included in public inventories | 3(a)(iv), 3(a)(v) | Annually | ✅ |
| Each Agency* | Share and release AI code, models, and data assets, as appropriate | 4(d) | Ongoing | ✅ |
| Each Agency* | Stop using any safety-impacting or rights-impacting AI that is not in compliance with Section 5(c) and has not received an extension or waiver | 5(a)(i) | December 1, 2024 (with extensions possible) | |
| Each Agency* | Certify the ongoing validity of the waivers and determinations granted under Section 5(c) and 5(b) and publicly release a summary detailing each and its justification | 5(a)(ii) | December 1, 2024 and annually thereafter | ✅ |
| Each Agency* | Conduct periodic risk reviews of any safety-impacting and rights-impacting AI in use | 5(c)(iv)(D) | At least annually and after significant modifications | ✅ |
| Each Agency* | Report to OMB any determinations made under Section 5(b) or waivers granted under Section 5(c) | 5(b); 5(c)(iii) | Ongoing, within 30 days of granting waiver | ✅ |

What to do now

At Collibra, we understand the stakes are high for public sector data leaders to meet the requirements articulated in the OMB Memorandum.

Collibra AI Governance is a FedRAMP certified software solution that can help federal agencies meet specific requirements of the OMB memo, including cataloging, assessing and monitoring any AI use case for improved AI model performance and reduced data risk.

With Collibra by your side, navigating the complexities of AI governance becomes a clear and structured journey, so that your agency meets the required standards and, most importantly, thrives in an era of responsible and transformative AI use.

To further explore how Collibra can support your agency in adopting robust AI governance practices, we invite you to learn more about our solutions tailored for the public sector.
