Wondering what you missed at one of the world’s largest events dedicated entirely to data governance and information quality? Here is what you should know. The DGIQ event takes place every year at the Catamaran Resort and Spa Hotel, resting alongside sunny Mission Bay and the Pacific Ocean beaches of San Diego, CA. If you haven’t been there yet, you should go. It’s BEAUTIFUL out there, and with the conference, it’s a good mix of learning and tropical weather. Last week, the resort was filled with 555+ professionals, including Chief Data Officers, Senior Data Governance Managers, leaders from Analytics, Business Intelligence, and Data Quality, Senior IT professionals, and Data Architects. Many great speakers enlightened attendees with case studies and a variety of subjects, covering:

- How to get started with data governance

- How to be successful in data quality and data governance

- Agile data governance and data quality

- Data governance and data quality metrics

- How to overcome roadblocks in implementing data governance and data quality programs

- Governing the data lake

- Complying with GDPR and other regulations

- Governance, privacy and the Internet of Things

- Establishing data standards

- Best-practice tips and takeaways

During the conference, I had the opportunity to meet with many data professionals, friends made over the years, and colleagues. One of the main themes of the conference was the “Trust” aspect of data: how do we ensure the data we use for business is fit for purpose? The question itself says a lot about the progress we have made as an industry. Based on my observations at DGIQ, a fairly large number of the attending organizations are at the peak of their governance adoption lifecycle curve. Many excellent presentations shared best practices for getting started with data governance and stewardship, as well as for the phases of data governance that follow. It reminded me of my previous interactions with customers at the Collibra Data Citizens conference, and of how a technology platform can play a fundamental role in turning learned best practices into action and accomplishment for the organization itself.

One of the keynote speakers, our very own co-founder and CTO Stan Christiaens, shared a new perspective on data governance. He described a paradigm shift in the space: organizations can drive value by taking data governance to the enterprise level, which allows them to think about the following:

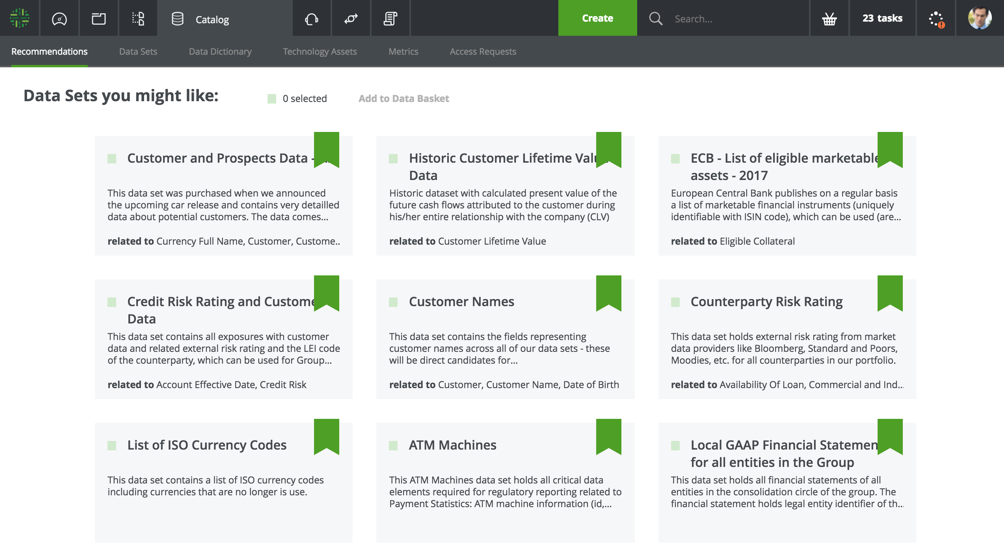

1. Centralized Data Cataloging

A single source for data citizens to find, understand, and trust data. In fact, studies show that even data professionals spend as much as 70% of their time simply searching for the right data. That’s frustrating for them and expensive for many organizations.

Here is a sample:

Figure 1: Data Catalog

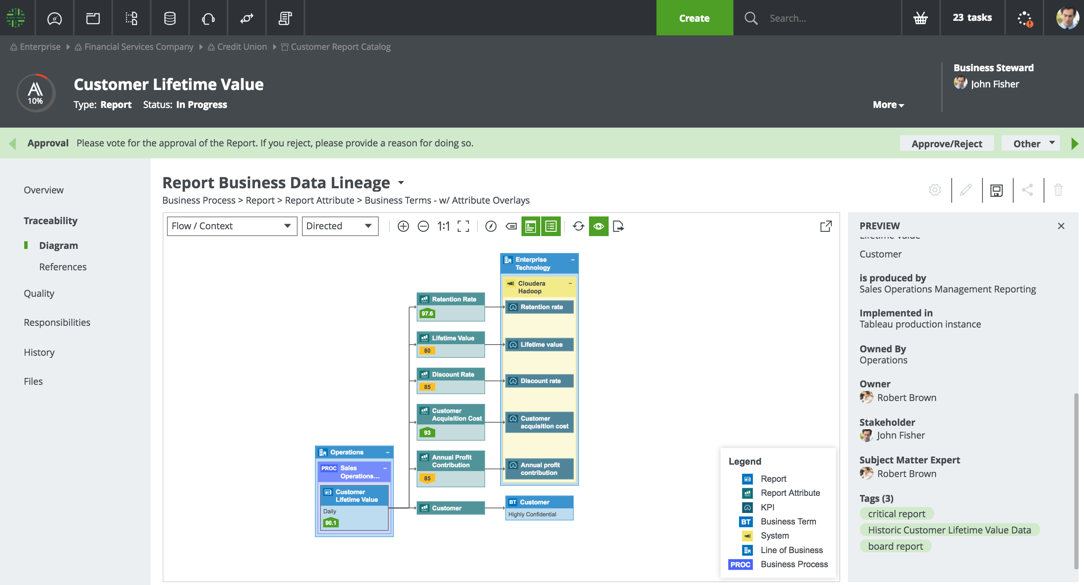

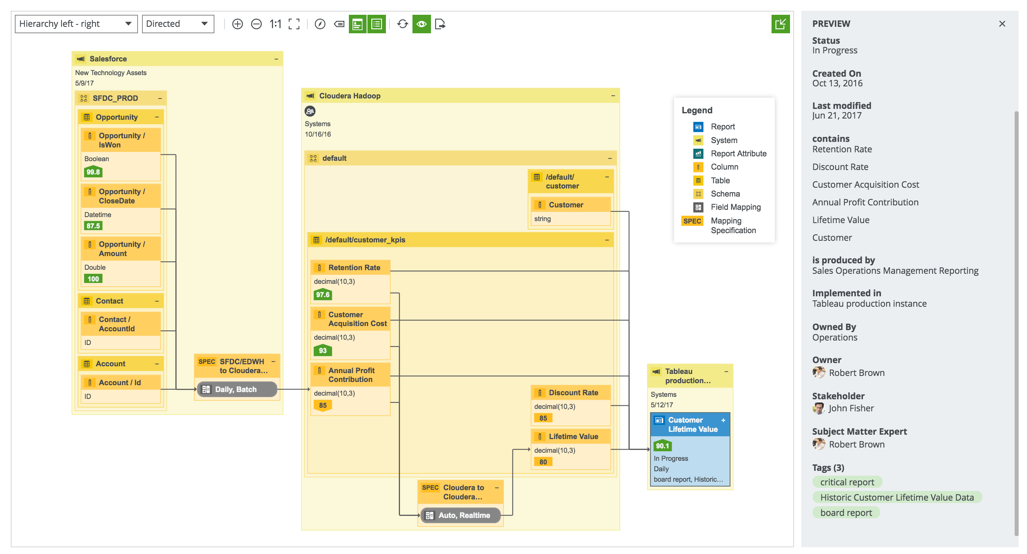
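To make the idea of a single place to find, understand, and trust data more concrete, here is a minimal Python sketch of a catalog lookup. It is not how Collibra or any particular product implements cataloging: `CatalogEntry`, `DataCatalog`, the `certified` flag, and the sample asset names are all hypothetical, assuming a catalog that stores a business definition, an owner, and a simple trust signal for each asset.

```python
from dataclasses import dataclass, field


@dataclass
class CatalogEntry:
    """One asset in a hypothetical data catalog."""
    name: str                 # technical name, e.g. a table or column
    definition: str           # business definition (helps users "understand")
    owner: str                # accountable data steward
    certified: bool = False   # hypothetical trust signal set by a steward
    tags: set[str] = field(default_factory=set)


class DataCatalog:
    """Minimal in-memory catalog: one place to register and search assets."""

    def __init__(self) -> None:
        self._entries: dict[str, CatalogEntry] = {}

    def register(self, entry: CatalogEntry) -> None:
        self._entries[entry.name] = entry

    def search(self, term: str, certified_only: bool = False) -> list[CatalogEntry]:
        """Case-insensitive match on name, definition, or tags."""
        term = term.lower()
        hits = [
            e for e in self._entries.values()
            if term in e.name.lower()
            or term in e.definition.lower()
            or any(term in t.lower() for t in e.tags)
        ]
        if certified_only:
            hits = [e for e in hits if e.certified]
        # Certified (trusted) assets first, then alphabetical by name
        return sorted(hits, key=lambda e: (not e.certified, e.name))


catalog = DataCatalog()
catalog.register(CatalogEntry("crm.cust_account", "Number uniquely identifying US customers",
                              "jane.doe", certified=True, tags={"customer"}))
catalog.register(CatalogEntry("stage.cust_tmp", "Temporary customer extract",
                              "etl_team", tags={"customer"}))
print([e.name for e in catalog.search("customer")])  # ['crm.cust_account', 'stage.cust_tmp']
```

Ranking certified assets first is one simple way to surface trusted data ahead of staging copies; a real catalog would also weigh usage, lineage, and quality scores.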

2. Data Lineage, Alerting, and Machine Learning

At DGIQ, many attendees, especially those on the journey to becoming data driven, named data lineage as THE most important requirement for data governance. Lineage allows data citizens to understand how data flows across the various business processes, systems, and applications. Though everyone is thinking about data lineage, very few are automating it for data governance. Automation means that when a change (e.g., to the data dictionary) is detected in a data mart, the data lineage is updated automatically and an exception review process is triggered. A subject matter expert can then be asked to map the newly identified change to the proper business context (e.g., the new column “cust_account” is defined as “customer account is a number for uniquely identifying US customers”). Much of this process is still manual, which is hard to sustain with large data sets. However, by introducing machine learning and automation into change management processes, users automatically get meaningful suggestions, which means they spend less time picking and choosing the right definitions for data.
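The change-management flow described above (detect a data dictionary change, open an exception review, suggest a business definition) can be sketched in Python as follows. This is an illustration under stated assumptions, not a product workflow: the data dictionary snapshots are plain sets of column names, `ReviewTask` and the helper functions are hypothetical, and `difflib` string similarity stands in for the machine learning suggestions, which would draw on far richer signals.

```python
import difflib
from dataclasses import dataclass


@dataclass
class ReviewTask:
    """Hypothetical exception-review item for a subject matter expert."""
    column: str
    change: str              # "added" or "removed"
    suggestions: list[str]   # candidate glossary terms, best match first


def detect_changes(old_cols: set[str], new_cols: set[str]) -> dict[str, set[str]]:
    """Compare two data dictionary snapshots of the same data mart table."""
    return {"added": new_cols - old_cols, "removed": old_cols - new_cols}


def suggest_terms(column: str, glossary: dict[str, str], n: int = 3) -> list[str]:
    """Rank glossary terms by string similarity to the column name.

    A simple stand-in for ML-based suggestions: it only compares
    normalized names, not data content or usage.
    """
    normalized = column.lower().replace("_", " ")
    by_key = {term.lower(): term for term in glossary}
    matches = difflib.get_close_matches(normalized, list(by_key), n=n, cutoff=0.4)
    return [by_key[m] for m in matches]


def review_tasks(old_cols: set[str], new_cols: set[str],
                 glossary: dict[str, str]) -> list[ReviewTask]:
    """Open one review task per detected change; new columns get suggestions."""
    changes = detect_changes(old_cols, new_cols)
    tasks = [ReviewTask(c, "added", suggest_terms(c, glossary))
             for c in sorted(changes["added"])]
    tasks += [ReviewTask(c, "removed", []) for c in sorted(changes["removed"])]
    return tasks


glossary = {
    "Customer Account": "A number for uniquely identifying US customers",
    "Order Date": "The date on which an order was placed",
}
for task in review_tasks({"cust_id", "order_dt"}, {"cust_id", "cust_account"}, glossary):
    print(task.column, task.change, task.suggestions)
```

The expert still makes the final mapping; the suggestions only shorten the list of definitions to choose from.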

Here are sample data lineage views:

Figure 2: Business Lineage

Figure 3: Technical Lineage

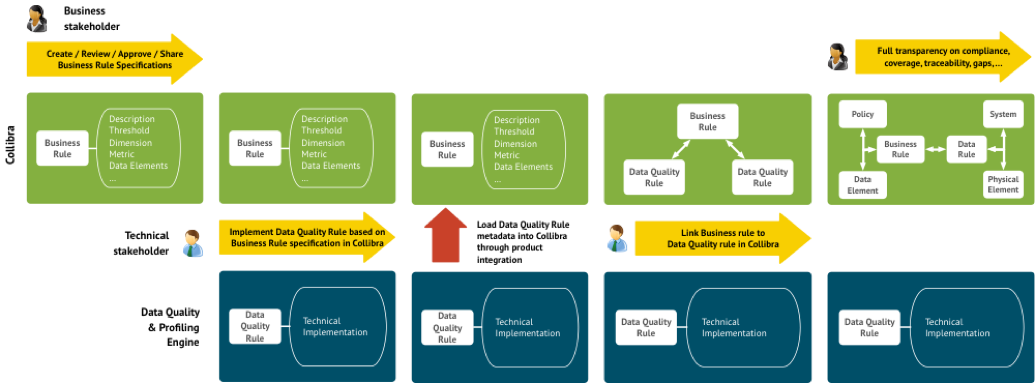

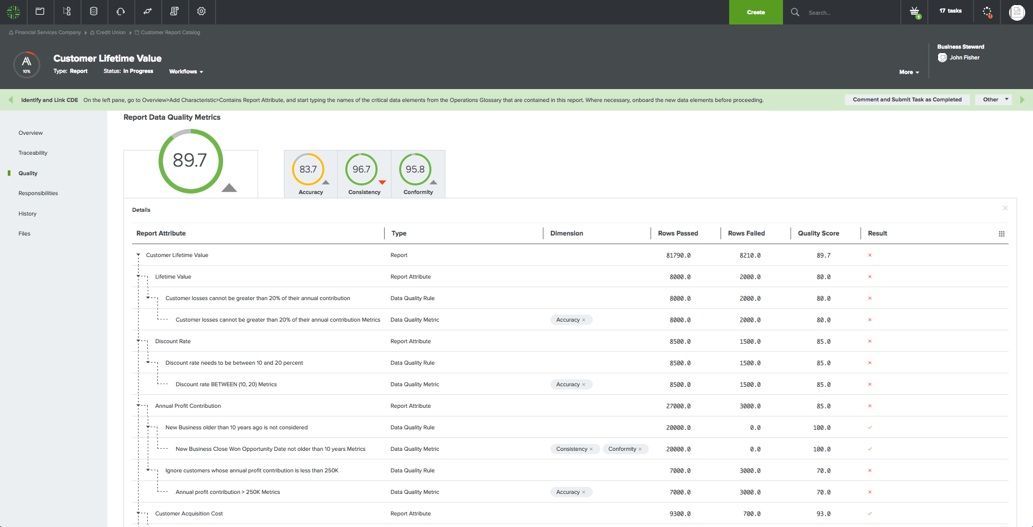

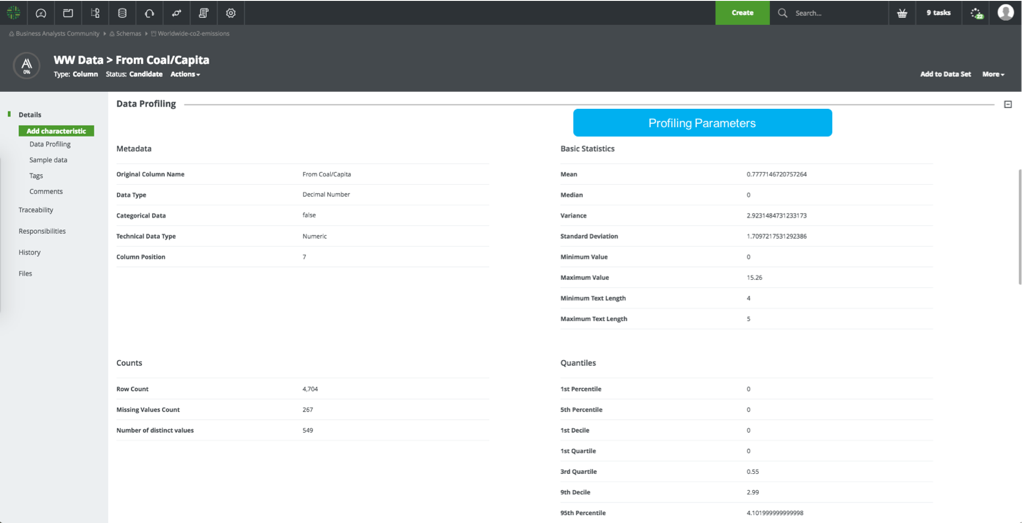
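Lineage views like these are, underneath, a directed graph of assets. The following sketch assumes lineage is stored as a hypothetical adjacency mapping (asset -> assets it feeds) and walks it breadth-first to answer a typical impact-analysis question: what is affected downstream if a source changes? The asset names are made up for illustration.

```python
from collections import deque


def downstream(lineage: dict[str, list[str]], start: str) -> list[str]:
    """Return every asset fed (directly or indirectly) by `start`, in BFS order.

    `lineage` maps each asset to the assets it feeds. Cycles are tolerated
    because each asset is visited at most once.
    """
    seen = {start}
    order = []
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for child in lineage.get(node, []):
            if child not in seen:
                seen.add(child)
                order.append(child)
                queue.append(child)
    return order


# Hypothetical technical lineage: source system -> data lake -> mart -> reports
lineage = {
    "crm.customers": ["lake.customers_raw"],
    "lake.customers_raw": ["mart.dim_customer"],
    "mart.dim_customer": ["report.churn_dashboard", "report.revenue_by_region"],
}
print(downstream(lineage, "crm.customers"))
# ['lake.customers_raw', 'mart.dim_customer', 'report.churn_dashboard', 'report.revenue_by_region']
```

Reversing the edges gives the upstream view ("where did this report's data come from?") with the same traversal.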

3. Data Quality & Data Profiling

Data quality is one of the key drivers for data governance. It enables business users to collaborate when the quality of data falls below the standard. In turn, data governance drives data quality (a key topic I also presented at the Collibra Data Citizens conference) by setting policies, standards, and rules at the business level and linking these rules to data quality metrics coming from data quality tools. Below is a sample outline of the process for defining business rules and implementing them as data quality rules:

Figure 4: Data Quality Process

And here is a sample data quality dashboard, which builds trust in data by expressing its quality as a percentage:

Figure 5: Data Quality Dashboard
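The link between a business rule and the percentage a dashboard shows can be sketched as follows. Everything here is hypothetical: `QualityRule`, the 10-digit account format, the email pattern, and the thresholds are assumptions chosen for illustration, not rules from any real data quality tool. Each rule's score is the share of rows that pass its technical check, and a score below the rule's threshold is the signal for stewards to collaborate on a fix.

```python
import re
from dataclasses import dataclass
from typing import Callable


@dataclass
class QualityRule:
    """A technical check implementing a business-level rule (hypothetical)."""
    business_rule: str                 # policy wording owned by the business
    column: str                        # column the technical check runs against
    check: Callable[[object], bool]    # True when a value conforms
    threshold: float                   # minimum acceptable pass rate, in percent


def score(rule: QualityRule, rows: list[dict]) -> float:
    """Percentage of rows whose value passes the rule's check."""
    if not rows:
        return 100.0  # assumption: an empty data set passes vacuously
    passed = sum(1 for row in rows if rule.check(row.get(rule.column)))
    return 100.0 * passed / len(rows)


def dashboard(rules: list[QualityRule], rows: list[dict]) -> list[tuple[str, float, bool]]:
    """One entry per rule: business rule, score, and whether it meets the threshold."""
    results = []
    for rule in rules:
        pct = score(rule, rows)
        results.append((rule.business_rule, round(pct, 1), pct >= rule.threshold))
    return results


rows = [
    {"cust_account": "1234567890", "email": "a@example.com"},
    {"cust_account": "12345", "email": None},
    {"cust_account": None, "email": "b@example.com"},
    {"cust_account": "9876543210", "email": "c@example.org"},
]
rules = [
    QualityRule("Every US customer has a 10-digit account number", "cust_account",
                lambda v: isinstance(v, str) and re.fullmatch(r"\d{10}", v) is not None, 95.0),
    QualityRule("Customer email addresses are well formed", "email",
                lambda v: isinstance(v, str) and re.fullmatch(r"[^@\s]+@[^@\s]+\.[^@\s]+", v) is not None, 75.0),
]
for business_rule, pct, ok in dashboard(rules, rows):
    print(f"{pct:5.1f}%  {'OK' if ok else 'ALERT'}  {business_rule}")
```

Keeping the business wording next to the technical check is what lets a dashboard score be traced back to the policy it measures.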

Figure 6: Data Profiling
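Data profiling summarizes what a column actually contains before rules are written against it. This minimal sketch computes a few statistics commonly found in profiling output (null percentage, distinct count, value lengths, and the most frequent value patterns); the function names, the treatment of empty strings as nulls, and the choice of statistics are assumptions, not the output format of any particular profiling tool.

```python
import re
from collections import Counter


def shape(value: str) -> str:
    """Reduce a value to a pattern: digits -> 9, letters -> A, other chars kept."""
    return re.sub(r"[A-Za-z]", "A", re.sub(r"\d", "9", value))


def profile(values: list) -> dict:
    """Basic column profile; None and empty strings are counted as nulls."""
    non_null = [v for v in values if v is not None and v != ""]
    strings = [str(v) for v in non_null]
    return {
        "count": len(values),
        "null_pct": round(100.0 * (len(values) - len(non_null)) / len(values), 1) if values else 0.0,
        "distinct": len(set(strings)),
        "min_length": min(map(len, strings), default=0),
        "max_length": max(map(len, strings), default=0),
        "top_patterns": Counter(shape(s) for s in strings).most_common(2),
    }


print(profile(["1234567890", "12345", None, "9876543210"]))
```

A pattern such as `99999` appearing next to the dominant `9999999999` is exactly the kind of finding that prompts a new data quality rule.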

All of the above enables data stewards, data analysts, and data scientists to work together while benefiting from a trusted source of information. Having fit-for-purpose data is a powerful advantage for organizations that want to stay ahead of the curve. But the big question is: what data can we trust today?